Difference between revisions of "Principal Component Analysis"

Kevin Dunn (talk | contribs) m (→Update) |

Revision as of 19:45, 20 September 2011

Class notes

<pdfreflow>
class_date = 16 September 2011 [1.65 Mb]
button_label = Create my projector slides!
show_page_layout = 1
show_frame_option = 1
pdf_file = lvm-class-2.pdf
</pdfreflow>

- Also download these 3 CSV files and bring them to class on your computer:

- Peas dataset: http://datasets.connectmv.com/info/peas

- Food texture dataset: http://datasets.connectmv.com/info/food-texture

- Food consumption dataset: http://datasets.connectmv.com/info/food-consumption

Class preparation

Class 2 (16 September)

- Reading for class 2

- Linear algebra topics you should be familiar with before class 2:

- matrix multiplication

- that multiplying a vector by a matrix is a transformation from one coordinate system to another (we will review this in class)

- linear combinations (read the first section of that website: we will review this in class)

- the dot product of 2 vectors, and how it is related to the cosine of the angle between them (see the geometric interpretation section)
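The linear algebra topics above can be tried out in a few lines of code. The following is only a minimal sketch using plain Python: the helper names (`matvec`, `dot`, `angle_between`, `linear_combination`) and the 45 degree rotation matrix are illustrative assumptions, not material from the class notes.

```python
import math

def matvec(A, x):
    """Multiply matrix A (a list of rows) by vector x."""
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in A]

def dot(u, v):
    """Dot product of two vectors of equal length."""
    return sum(u_i * v_i for u_i, v_i in zip(u, v))

def angle_between(u, v):
    """Angle in radians, from u.v = |u| |v| cos(theta)."""
    cos_theta = dot(u, v) / (math.sqrt(dot(u, u)) * math.sqrt(dot(v, v)))
    # Clamp to [-1, 1] to guard against floating-point round-off
    return math.acos(max(-1.0, min(1.0, cos_theta)))

def linear_combination(weights, vectors):
    """Return sum_k weights[k] * vectors[k]."""
    n = len(vectors[0])
    return [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(n)]

# Hypothetical example: axes rotated by 45 degrees.
# Each row of R is one of the new axes, written in the old coordinates.
c = math.cos(math.pi / 4)
s = math.sin(math.pi / 4)
R = [[c, s],
     [-s, c]]

x = [1.0, 1.0]
x_new = matvec(R, x)  # coordinates of x in the rotated system

# The same product, seen as a linear combination of the columns of R
columns = [[R[0][0], R[1][0]], [R[0][1], R[1][1]]]
combo = linear_combination(x, columns)

print("x in new coordinates:", x_new)
print("as linear combination:", combo)
print("angle between [1, 0] and [1, 1]:", angle_between([1.0, 0.0], [1.0, 1.0]))
```

The point (1, 1) lies along the first rotated axis, so its second coordinate in the new system is zero apart from round-off.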

Class 3 (23 September)

- Least squares:

- what is the objective function of least squares

- how to calculate the two regression coefficients

- understand that the residuals in least squares are orthogonal to x (the predictor variable)
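As a rough check on the least squares items above, the sketch below fits a straight line with an intercept and a slope (the two regression coefficients) and then verifies numerically that the residuals are orthogonal to the predictor. The data values and the function name `fit_line` are made up for illustration and are not taken from the class datasets.

```python
def fit_line(x, y):
    """Least squares fit of y = b0 + b1*x.

    Minimizes the objective: sum of squared residuals,
    sum_i (y_i - b0 - b1*x_i)^2.
    """
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = s_xy / s_xx          # slope
    b0 = y_bar - b1 * x_bar   # intercept
    return b0, b1

# Made-up data, for illustration only
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

b0, b1 = fit_line(x, y)
residuals = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]

# Value of the objective function at the solution
sse = sum(e ** 2 for e in residuals)

# Orthogonality checks: both sums should be zero up to round-off
orth_x = sum(e * xi for e, xi in zip(residuals, x))
orth_1 = sum(residuals)

print("intercept, slope:", b0, b1)
print("sum of squared residuals:", sse)
print("residuals . x:", orth_x)
print("sum of residuals:", orth_1)
```

Because the model includes an intercept, the residuals also sum to zero, which is the same orthogonality property applied to a column of ones.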

- Some optimization theory:

- How an optimization problem is written with equality constraints

- The Lagrange multiplier principle (http://en.wikipedia.org/wiki/Lagrange_multiplier) for solving simple, equality-constrained optimization problems. (Understanding the content on this page is very important.)

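- Reading on cross validation: http://literature.connectmv.com/item/12/cross-validatory-estimation-of-the-number-of-components-in-factor-and-principal-components-models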
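For the optimization items above, a small worked example may help show how an equality-constrained problem is written and then solved with a Lagrange multiplier. The toy problem and the symbols $x$, $y$, $\lambda$, $\mathbf{X}$ and $\mathbf{p}$ are assumptions chosen for illustration; they are not taken from the class notes.

```latex
% Toy problem: minimize f(x, y) subject to one equality constraint
\min_{x,\,y} \; f(x, y) = x^2 + y^2
\qquad \text{subject to} \qquad x + y = 1

% Form the Lagrangian with multiplier \lambda
\mathcal{L}(x, y, \lambda) = x^2 + y^2 - \lambda\,(x + y - 1)

% Set all partial derivatives to zero
\frac{\partial \mathcal{L}}{\partial x} = 2x - \lambda = 0, \qquad
\frac{\partial \mathcal{L}}{\partial y} = 2y - \lambda = 0, \qquad
\frac{\partial \mathcal{L}}{\partial \lambda} = -(x + y - 1) = 0

% Solve: x = y = \lambda / 2, and the constraint gives
x = y = \tfrac{1}{2}, \qquad \lambda = 1

% The same recipe with a unit-length constraint, where \mathbf{X} is taken
% to be a centered data matrix and \mathbf{p} a direction vector:
\max_{\mathbf{p}} \; \mathbf{p}^{T}\mathbf{X}^{T}\mathbf{X}\,\mathbf{p}
\qquad \text{subject to} \qquad \mathbf{p}^{T}\mathbf{p} = 1

\mathcal{L}(\mathbf{p}, \lambda)
  = \mathbf{p}^{T}\mathbf{X}^{T}\mathbf{X}\,\mathbf{p}
  - \lambda\,(\mathbf{p}^{T}\mathbf{p} - 1)

\frac{\partial \mathcal{L}}{\partial \mathbf{p}}
  = 2\,\mathbf{X}^{T}\mathbf{X}\,\mathbf{p} - 2\lambda\,\mathbf{p} = \mathbf{0}
\quad \Longrightarrow \quad
\mathbf{X}^{T}\mathbf{X}\,\mathbf{p} = \lambda\,\mathbf{p}
```

The last line is an eigenvalue equation, which is one way to see why this technique matters for principal component analysis.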

Update

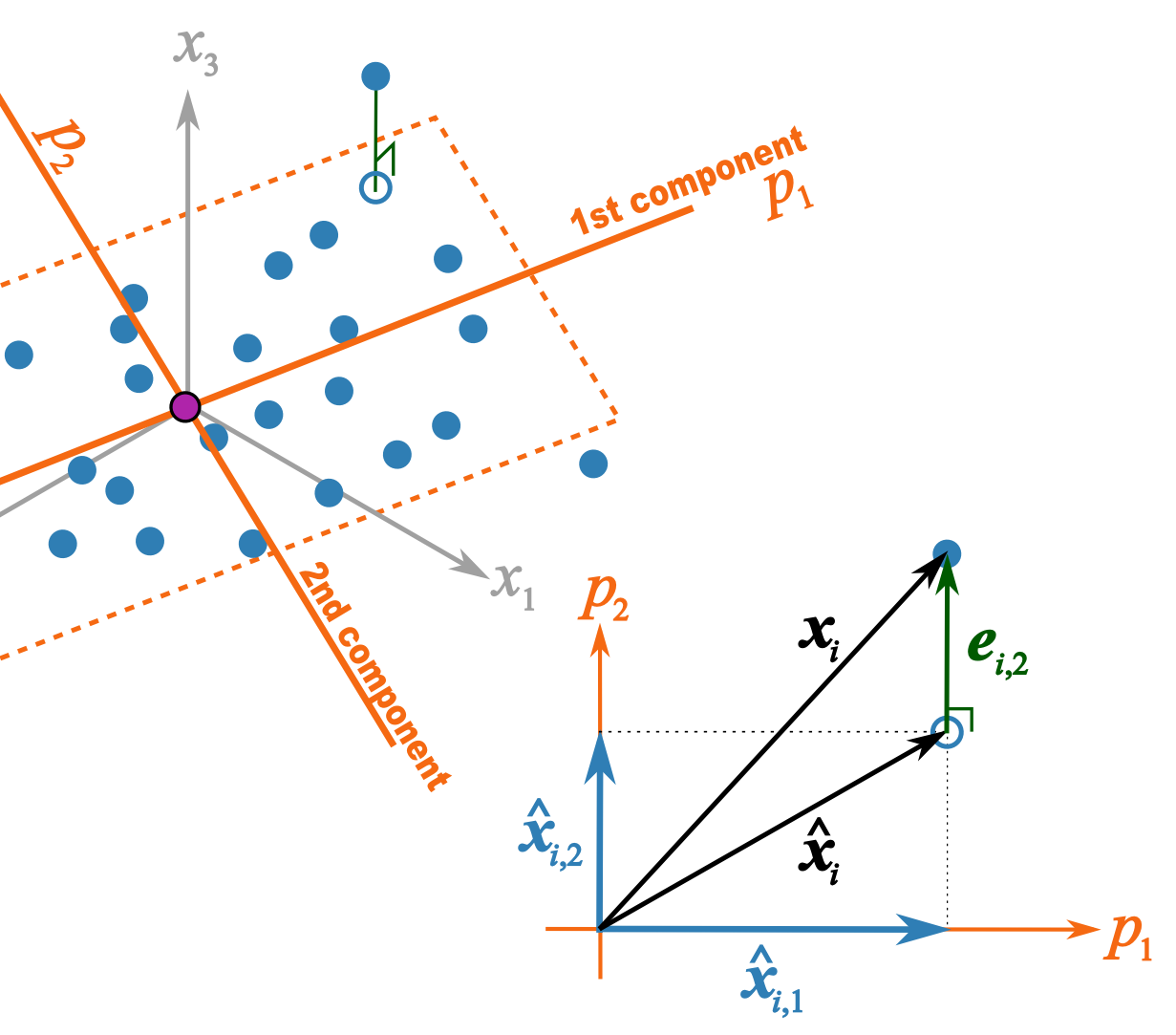

This illustration should help better explain what I was trying to get across in class 2B.