5.9.1. Half fractions

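A half fraction has $\frac{1}{2} 2^k = 2^{k-1}$ runs, where $k$ is the number of factors. With 3 factors that is 4 runs: write out the usual $2^2$ full factorial in factors A and B, then construct the third factor as the product of the first two, C = AB.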

| Experiment | A | B | C = AB |
|---|---|---|---|
| 1 | − | − | + |
| 2 | + | − | − |
| 3 | − | + | − |
| 4 | + | + | + |

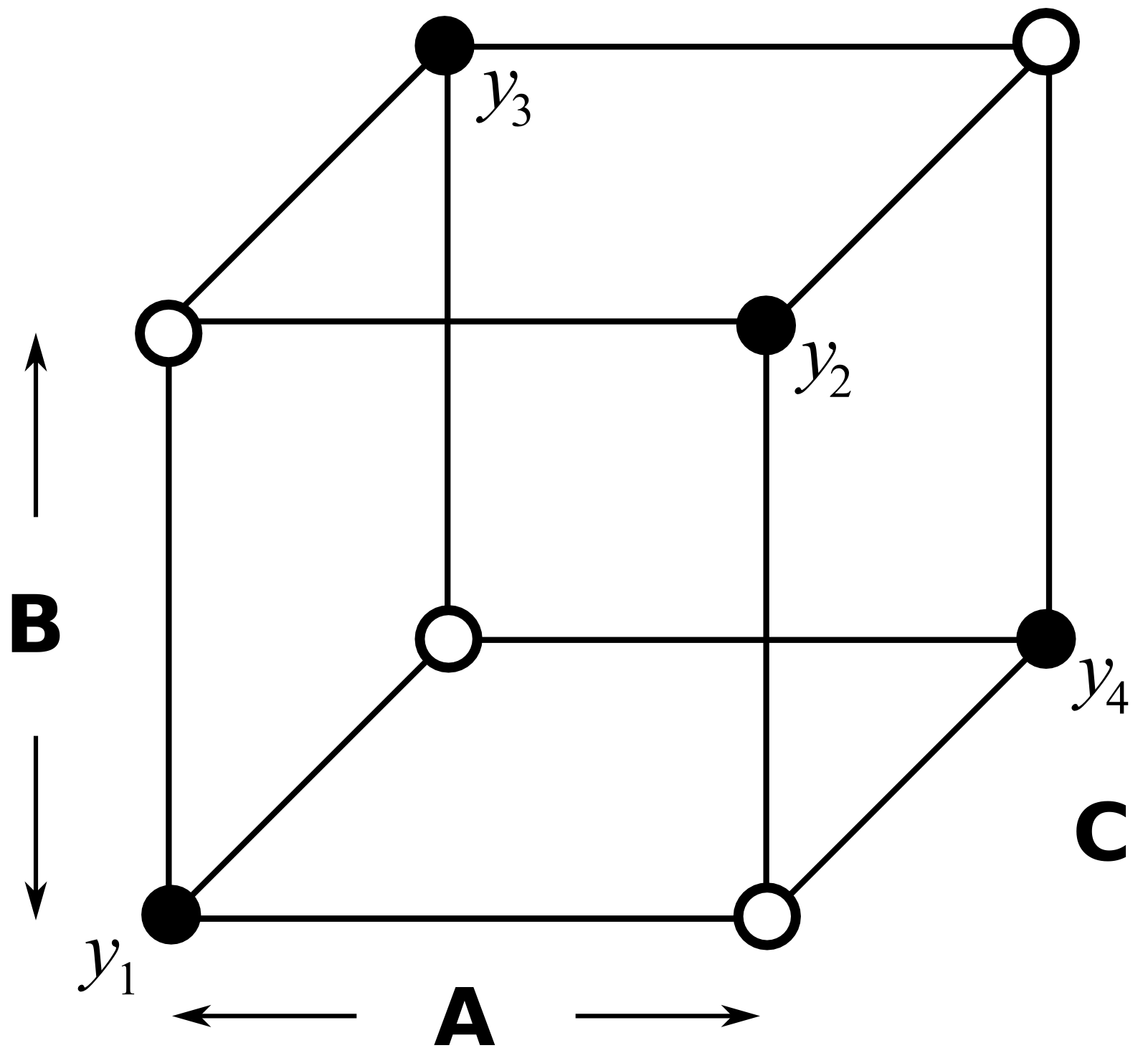

So this is our half-factorial designed experiment in 3 factors, but it only requires 4 experiments as shown by the open points in the figure. The experiments given by the solid points are not run.
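The construction can be sketched in a few lines of Python with NumPy. This is only an illustration, assuming factors coded as −1 and +1 and listed in standard order; the variable names are not from the original text.

```python
import itertools
import numpy as np

# Full 2^2 factorial in A and B, in standard order (A changes fastest).
base = np.array([(a, b) for b, a in itertools.product([-1, 1], repeat=2)])
A, B = base[:, 0], base[:, 1]

# The third factor is generated as the product of the first two.
C = A * B

half_fraction = np.column_stack([A, B, C])
print(half_fraction)

# The full 2^3 factorial has 8 runs; the half fraction keeps
# only the runs whose C level equals the product A*B.
full = np.array(list(itertools.product([-1, 1], repeat=3)))
kept = full[full[:, 2] == full[:, 0] * full[:, 1]]
print(len(full), len(kept))
```

The 4 rows of `kept` are the same runs as `half_fraction`, only listed in a different order; the other 4 runs of the full factorial are the ones that are not performed.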

What have we lost by running only half of the full factorial? Let’s write out the full design and matrix of all interactions, then construct the $\mathbf{X}$ matrix for the least squares model.

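| Experiment | A | B | C | AB | AC | BC | ABC | Intercept |
|---|---|---|---|---|---|---|---|---|
| 1 | − | − | + | + | − | − | + | + |
| 2 | + | − | − | − | − | + | + | + |
| 3 | − | + | − | − | + | − | + | + |
| 4 | + | + | + | + | + | + | + | + |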

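Before even constructing the $\mathbf{X}$ matrix, you can see that the A column is identical to the BC column, that B = AC and C = AB (this last association was intentional), and that the intercept column I = ABC.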

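The least squares model would be:

$$
y_i = b_0 + b_A x_A + b_B x_B + b_C x_C + b_{AB} x_A x_B + b_{AC} x_A x_C + b_{BC} x_B x_C + b_{ABC} x_A x_B x_C + e_i
$$

The $\mathbf{X}$ matrix for this model has 4 rows but 8 columns, so there are more unknowns than equations. In addition, 4 of its columns are identical to the other 4, so there is perfect collinearity between 4 pairs of columns.

For these reasons the least squares model cannot be solved by inverting the $\mathbf{X}^T\mathbf{X}$ matrix.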
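The rank deficiency can be checked numerically. The following is a minimal sketch, assuming −1/+1 coding and the column order intercept, A, B, C, AB, AC, BC, ABC; the names `X`, `names` and `identical` are illustrative only.

```python
import numpy as np

# The 4 runs of the half fraction, with C generated as A*B.
A = np.array([-1, 1, -1, 1])
B = np.array([-1, -1, 1, 1])
C = A * B

# One column per term of the full 8-term model.
names = ["I", "A", "B", "C", "AB", "AC", "BC", "ABC"]
X = np.column_stack([np.ones(4, dtype=int), A, B, C, A * B, A * C, B * C, A * B * C])

# X has 4 rows and 8 columns, so its rank can be at most 4,
# and the 8x8 matrix X^T X must therefore be singular.
rank = np.linalg.matrix_rank(X)
XtX = X.T @ X
xtx_rank = np.linalg.matrix_rank(XtX)
print(X.shape, rank, xtx_rank)

# List every pair of columns that are identical.
identical = [
    (names[i], names[j])
    for i in range(8)
    for j in range(i + 1, 8)
    if np.array_equal(X[:, i], X[:, j])
]
print(identical)
```

The list of identical column pairs pairs the intercept with ABC, A with BC, B with AC, and C with AB.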

To resolve this problem we can reformulate the model to obtain independent columns, grouping together the columns which are identical. There are now 4 equations and 4 unknowns:

$$
y_i = (b_0 + b_{ABC}) + (b_A + b_{BC}) x_A + (b_B + b_{AC}) x_B + (b_C + b_{AB}) x_C + e_i
$$

Writing it this way clearly shows how the main effects and two-factor interactions are confounded.

I + ABC

A + BC: this implies the coefficient estimated for factor A is the sum of the A main effect and the BC interaction

B + AC

C + AB

It means we cannot separate, for example, the effect of the BC interaction from the main effect of A: the least-squares coefficient is a sum of both these effects. Similarly for the other pairs. This is why we say the factor A is confounded with the two-factor interaction BC. Factor B is confounded with AC, and factor C is confounded with AB. Also the intercept is not a pure estimate of the intercept; it is confounded with the 3-factor interaction ABC.
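A small numerical experiment makes the summing visible. In this sketch the "true" coefficients are invented purely for illustration (they are not data from the text), and the response is generated without noise from the full 8-term model at the 4 runs of the half fraction.

```python
import numpy as np

# The 4 runs of the half fraction, with C generated as A*B.
A = np.array([-1, 1, -1, 1])
B = np.array([-1, -1, 1, 1])
C = A * B

# Hypothetical true coefficients, made up for this illustration only.
true = {"I": 10.0, "A": 3.0, "B": -2.0, "C": 1.0,
        "AB": 0.5, "AC": 0.25, "BC": 4.0, "ABC": -1.0}

# Noise-free response from the full model, evaluated at the 4 runs.
y = (true["I"] + true["A"] * A + true["B"] * B + true["C"] * C
     + true["AB"] * A * B + true["AC"] * A * C
     + true["BC"] * B * C + true["ABC"] * A * B * C)

# Reduced model with independent columns: intercept, A, B, C.
X = np.column_stack([np.ones(4), A, B, C])
b = np.linalg.solve(X.T @ X, X.T @ y)
print(b)

# Each estimate equals the sum of the two aliased true effects.
expected = np.array([
    true["I"] + true["ABC"],
    true["A"] + true["BC"],
    true["B"] + true["AC"],
    true["C"] + true["AB"],
])
print(np.allclose(b, expected))
```

With these made-up values the coefficient for A comes out as 7, which is the sum of the A main effect (3) and the BC interaction (4); nothing in the 4 runs can tell the two contributions apart.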

This is what we have lost by running a half-fraction: the benefit of running fewer experiments is paid for with confounding among the effects we estimate.

We introduce the terminology that A is an alias for BC, and similarly that B is an alias for AC, and so on, because we cannot separate these aliased effects.
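The aliases can also be derived algebraically instead of being read off the table. A brief sketch, assuming each letter stands for its column of −1 and +1 values, so that any column multiplied by itself gives a column of +1 (written I here):

```latex
\begin{align*}
C = AB \;&\Rightarrow\; BC = B(AB) = A B^2 = A \\
C = AB \;&\Rightarrow\; AC = A(AB) = A^2 B = B \\
C = AB \;&\Rightarrow\; ABC = (AB)(AB) = A^2 B^2 = I
\end{align*}
```

Each line reproduces one of the confounded pairs: A with BC, B with AC, and the intercept with ABC, while C with AB is the relationship used to build the design in the first place.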